How to Manage Process Development Data Across Multiple Programs Without Losing Traceability

Running one program is manageable. Running two is where the cracks start. Running three or more is where data traceability either becomes a system — or quietly becomes a myth.

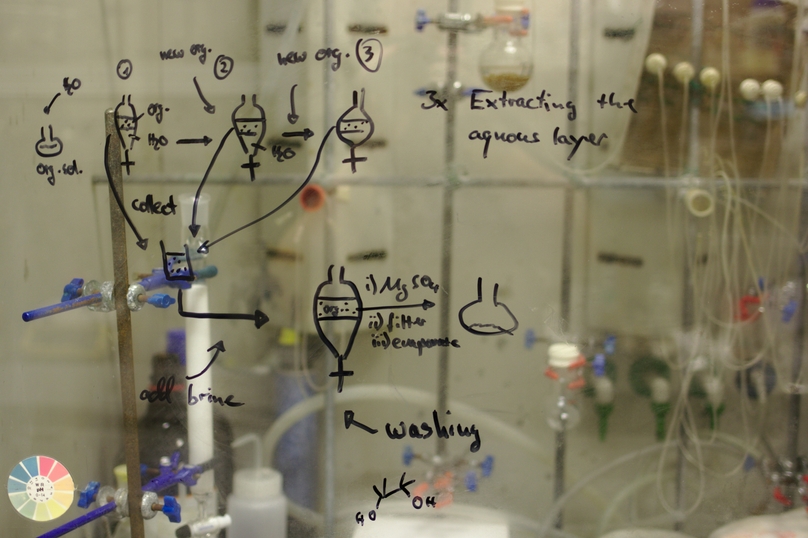

Here's a situation that comes up more than most labs want to admit. A process development team that ran one program effectively — good records, consistent conventions, decent traceability — launches a second program. They apply the same approach. It works, roughly. Then a third program starts. And somewhere in the middle of running three parallel development campaigns, often with shared equipment, shared material stocks, and scientists who are context-switching between all of them, the data management picture starts to blur.

It's not that records stop being created. Records keep getting created. The problem is that the records from Program A start looking structurally different from Program B's records, because two scientists developed slightly different habits. Material lots get used across programs without a clear log of which lot went where. An unexpected result in Program C triggers an investigation that requires tracing back through six months of shared reagent usage — and the trace breaks somewhere in the middle because nobody designed a system that could span three programs simultaneously.

This is the multi-program traceability problem. It's not exotic. It's the default experience of any process development team that scales faster than its data infrastructure.

Why multi-program environments break single-program systems

The tools and conventions that work for a single program often survive a second one through sheer effort. Scientists know the context, remember the decisions, and can mentally hold the full picture of one program's history. That cognitive load is manageable for one program. It starts to fail around two, and it's usually unsustainable at three.

The failure modes are predictable once you know what to look for.

Namespace collision. When programs share an inventory system — or worse, a spreadsheet — sample IDs, lot numbers, and experiment identifiers start to collide or get recycled across programs. "Run 047" might exist in both Program A and Program C. Material Lot B-112 might mean something different depending on which program's context you're reading it in. Cross-program queries return ambiguous results.
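A minimal Python sketch of the collision, and one way to avoid it, assuming identifiers are scoped by program. The `RunKey` type, the program codes, and the ID format are hypothetical illustrations, not a convention from any particular system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RunKey:
    """Hypothetical composite identifier: program code plus run number."""
    program: str
    run_number: int

    def __str__(self) -> str:
        return f"{self.program}-RUN-{self.run_number:03d}"


# Bare run numbers collide: the second write silently replaces the first.
by_number = {}
by_number[47] = "Program A upstream run"
by_number[47] = "Program C purification run"

# Program-scoped keys keep both records addressable.
by_key = {
    RunKey("A", 47): "Program A upstream run",
    RunKey("C", 47): "Program C purification run",
}
```

With bare numbers, "Run 047" resolves to whichever program wrote last. With a program-scoped key, both runs survive and a lookup is unambiguous.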

Convention drift. The scientist who built the Program A data structure documents upstream runs one way. The scientist who owns Program B developed a slightly different format. By the time Program C launches, there are three variations of what an upstream fermentation record looks like — none of them wrong, all of them incompatible for cross-program analysis.

Shared material opacity. In single-program labs, tracking which lot went into which experiment is relatively simple. In multi-program environments, reagents are frequently shared: the same buffer, the same enzyme lot, the same cell bank derivative goes into experiments across multiple programs. Without lot-level traceability that spans programs, a quality event touching a shared material becomes an investigation with an unbounded scope.

Tech transfer fragility. When a program moves toward clinical or manufacturing, the process development data package needs to tell a coherent story — from the first small-scale runs through process optimization to the parameters being transferred. If that history is spread across three scientists' notebooks, two spreadsheets, and a shared drive with inconsistent folder naming, assembling that story is a project in itself. We've seen it take weeks. We've seen it surface gaps that required additional experiments to fill.

The framework: five structural decisions that determine whether traceability holds

Multi-program traceability isn't primarily a technology problem. It's a design problem. The labs that maintain clean traceability across multiple programs have made specific structural decisions — usually early — that create the right conditions for data to stay connected as complexity grows. Here's what those decisions look like.

Build a program-aware data architecture from the start

The most foundational decision is whether your data system has a native concept of "program" as an organizing layer. Not a folder. Not a naming convention. A structural layer that sits above experiments and samples, that can be used to filter, segregate, and query across the entire dataset.

In practice, this means that every sample, every experiment, and every material consumption record is tagged to a program at the time of creation — not assigned to a folder later. When you need to pull everything associated with Program B for a tech transfer package, it's a query, not a search. When a material lot is used across Programs A and C, both uses are visible from the lot record without cross-referencing separate systems.

Without a program layer: programs are organized as folders in a shared drive or as separate spreadsheet tabs. Cross-program queries require manual aggregation. Material usage across programs is invisible without asking around.

With a program layer: programs are a native data layer. Every record is tagged at creation. Cross-program queries run in seconds. Shared material usage is automatically visible across all programs that consumed it.
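To make "tagged at creation" concrete, here is a small sketch using Python's built-in `sqlite3` module. The table and column names are assumptions chosen for illustration. The point is only that the program reference is a required column, so an untagged record cannot be saved, and pulling everything for one program is a single query.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
# SQLite only enforces foreign keys when this pragma is switched on.
conn.execute("PRAGMA foreign_keys = ON")
conn.executescript("""
    CREATE TABLE program (id TEXT PRIMARY KEY);
    CREATE TABLE experiment (
        id         TEXT PRIMARY KEY,
        program_id TEXT NOT NULL REFERENCES program(id),
        stage      TEXT NOT NULL
    );
""")
conn.executemany("INSERT INTO program VALUES (?)", [("A",), ("B",), ("C",)])
conn.executemany(
    "INSERT INTO experiment VALUES (?, ?, ?)",
    [
        ("A-RUN-047", "A", "upstream"),
        ("B-RUN-001", "B", "upstream"),
        ("B-RUN-002", "B", "downstream"),
    ],
)


def experiments_for(program_id):
    """Everything for one program is a query, not a folder search."""
    rows = conn.execute(
        "SELECT id FROM experiment WHERE program_id = ? ORDER BY id",
        (program_id,),
    )
    return [row[0] for row in rows]


def insert_rejected(experiment_id, program_id):
    """Return True if the database refuses the record."""
    try:
        conn.execute(
            "INSERT INTO experiment VALUES (?, ?, ?)",
            (experiment_id, program_id, "upstream"),
        )
    except sqlite3.IntegrityError:
        return True
    return False
```

Here `experiments_for("B")` returns both Program B runs, while `insert_rejected("X-1", None)` and `insert_rejected("X-2", "Z")` both return `True`: a record with no program, or with a program that does not exist, never enters the dataset.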

Standardize experiment structure across programs, not within them

This is where most multi-program labs get the level of abstraction wrong. They standardize within each program — Program A has consistent upstream records, Program B has its own consistent structure — but the structures across programs are different. That's better than nothing, but it still makes cross-program analysis impossible and makes onboarding scientists onto new programs a relearning exercise every time.

The right level of standardization is at the process stage level, applied uniformly across all programs. An upstream bioreactor run record should look the same whether it's for Program A, Program B, or a new program that launches next quarter. The program-specific content goes in the designated fields. The structural skeleton — required parameters, outcome fields, deviation notation format — stays constant across programs.

Getting this right requires some upfront conversation between program leads. There will be resistance — "our upstream process is different from theirs." That's true at the parameter level. It shouldn't be true at the structural level. Push through the resistance. The payoff in cross-program comparability and scientist mobility is significant.
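One way to picture "same skeleton, program-specific content" is a single record type shared by every program. The sketch below is an assumption about what such a template might contain. The field names, units, and example values are hypothetical and chosen only to show the shape.

```python
from dataclasses import dataclass, field, fields
from typing import Any, Dict, List, Optional


@dataclass
class UpstreamRunRecord:
    """Hypothetical shared template for an upstream bioreactor run."""
    # Structural skeleton: identical for every program.
    program: str
    run_id: str
    vessel_volume_l: float
    temperature_c: float
    ph_setpoint: float
    final_titer_g_per_l: Optional[float] = None
    deviations: List[str] = field(default_factory=list)
    # Designated slot for content that only one program needs.
    program_fields: Dict[str, Any] = field(default_factory=dict)


def skeleton(record):
    """Names of the fields every record of this type carries."""
    return [f.name for f in fields(record)]


run_a = UpstreamRunRecord(
    "A", "A-RUN-047", 5.0, 37.0, 7.0,
    program_fields={"feed_strategy": "fed-batch"},
)
run_c = UpstreamRunRecord(
    "C", "C-RUN-047", 50.0, 30.0, 6.8,
    program_fields={"induction_od600": 0.6},
)

# Because the skeleton is shared, cross-program comparison is a one-liner.
temperatures = {r.run_id: r.temperature_c for r in (run_a, run_c)}
```

The parameter values differ between programs, and so do the contents of `program_fields`, but `skeleton(run_a) == skeleton(run_c)` holds by construction. That is the property that keeps records comparable across the portfolio.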

Enforce lot-level material tracking across all programs simultaneously

In multi-program environments, shared reagents and materials are the highest traceability risk. When a buffer lot, an enzyme preparation, or a cell bank derivative is used across three programs, and a quality event occurs, the investigation needs to trace that lot's usage across all programs — not just the one where the problem was observed.

The only way this works is if lot-level tracking is enforced at the point of use, not recorded after the fact. "The scientist will note the lot number in their notebook" is not a system. It's an aspiration. In a busy lab running multiple parallel programs, it's an aspiration that fails regularly — not because scientists are careless, but because the cognitive load of context-switching between programs makes manual tracking unreliable.

Systems that link material consumption to experiment records automatically — pulling lot information from inventory at the time the sample is logged into a run, rather than expecting a scientist to transcribe it — produce consistently complete traceability records. The difference isn't discipline. It's architecture.

A shared reagent quality event with incomplete cross-program lot tracking doesn't just affect the program where the problem was found. It creates potential scope uncertainty across every program that might have used the same lot — leading to either over-investigation (expensive and disruptive) or under-investigation (a regulatory liability). Lot-level traceability across programs isn't overhead. It's risk containment.
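The architectural difference can be sketched in a few lines of Python. In this illustration the scientist supplies only a container barcode and the lot number is pulled from inventory, never typed. The inventory contents, barcodes, lot numbers, and function names are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical inventory: container barcode -> lot number.
INVENTORY = {
    "BC-0001": "LOT-B-112",
    "BC-0002": "LOT-B-112",
    "BC-0003": "LOT-E-030",
}


@dataclass(frozen=True)
class Consumption:
    program: str
    experiment_id: str
    barcode: str
    lot: str


consumption_log = []


def log_consumption(program, experiment_id, barcode):
    """Record a material use; the lot comes from inventory, not from memory."""
    lot = INVENTORY[barcode]  # raises KeyError for an unknown container
    entry = Consumption(program, experiment_id, barcode, lot)
    consumption_log.append(entry)
    return entry


def experiments_using(lot):
    """Every (program, experiment) pair that consumed a lot, across programs."""
    return sorted(
        {(c.program, c.experiment_id) for c in consumption_log if c.lot == lot}
    )


log_consumption("A", "A-RUN-047", "BC-0001")
log_consumption("C", "C-RUN-012", "BC-0002")
log_consumption("B", "B-RUN-003", "BC-0003")
```

If a quality event touches `LOT-B-112`, `experiments_using("LOT-B-112")` returns the Program A and Program C runs and nothing else. The investigation scope is bounded by the data rather than by what people remember.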

Build tech transfer readiness into the data structure, not the process

Most teams think about tech transfer as something that happens at the end of process development: a packaging exercise where you assemble the history and hand it over. That framing creates the multi-week data assembly projects described earlier. The better framing is this: every experiment record you create is a future element of a tech transfer package. Design the records accordingly.

In practical terms, this means that the data structure of your process development records should map, from the beginning, to the structure of the development report you'll eventually need to produce. The parameters you'll need to justify, the ranges you'll need to demonstrate, the material inputs you'll need to trace — those requirements are knowable in advance. Building experiment templates that capture them consistently from run one means the tech transfer package is assembled, essentially, as a query — not reconstructed from fragments.

Labs that do this well describe tech transfer as "pulling a report" rather than "writing a history." The difference in effort is substantial. The difference in accuracy — because the data was structured from the start rather than interpreted retrospectively — is arguably more important.

A process development team at a mid-stage biotech described their most recent tech transfer preparation as taking four days — including review. Their previous transfer, before implementing structured templates and program-level data architecture, took three weeks with two people. The science hadn't changed. The infrastructure had.
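As a rough sketch of what "assembled as a query" can mean: if every run record carries the same parameter fields, the demonstrated ranges for a program can be computed directly from the records. The records, parameter names, and values below are made up for illustration.

```python
# Hypothetical structured run records; the values are illustrative only.
runs = [
    {"program": "B", "run_id": "B-RUN-001", "temperature_c": 36.5, "ph": 7.0},
    {"program": "B", "run_id": "B-RUN-002", "temperature_c": 37.0, "ph": 6.9},
    {"program": "A", "run_id": "A-RUN-047", "temperature_c": 30.0, "ph": 7.2},
]


def parameter_ranges(records, program, parameters):
    """Observed (min, max) per parameter across one program's runs."""
    selected = [r for r in records if r["program"] == program]
    if not selected:
        return {}
    return {
        p: (min(r[p] for r in selected), max(r[p] for r in selected))
        for p in parameters
    }


def run_ids(records, program):
    """The runs that support the ranges, for traceability in the report."""
    return [r["run_id"] for r in records if r["program"] == program]
```

Calling `parameter_ranges(runs, "B", ["temperature_c", "ph"])` gives the explored range for each parameter in Program B, and `run_ids(runs, "B")` lists the runs behind those numbers. Neither step involves reading a notebook.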

Separate program data access without fragmenting the data system

Multi-program labs increasingly work with external partners — CROs, CDMOs, licensing collaborators — who need access to specific program data without visibility into other programs. Managing this through separate systems or separate physical databases is the instinctive solution, and it's usually the wrong one. Separate systems mean separate governance, separate data structures, and the same cross-program traceability problems described above, now amplified by an integration gap.

The better solution is program-level access controls within a single unified system. Scientists and partners are granted access to the programs relevant to their work. The data layer remains unified — meaning cross-program queries, shared material traceability, and portfolio-level visibility are all possible for those with appropriate access — but each program's data is protected from lateral visibility where that's required.

This is particularly important for CROs and CDMOs running multiple client programs on the same platform. Client data segregation is a contractual and competitive requirement. But it shouldn't come at the cost of the operational traceability benefits of a unified data architecture.
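A simplified sketch of the idea, assuming access is granted per program and applied as a filter over one shared dataset. The user names, grants, and records are hypothetical, and a real system would enforce this in the data layer rather than in application code like this.

```python
# One unified dataset; program is an attribute of every record.
RECORDS = [
    {"program": "A", "run_id": "A-RUN-047"},
    {"program": "B", "run_id": "B-RUN-001"},
    {"program": "C", "run_id": "C-RUN-012"},
]

# Hypothetical grants: user -> set of programs they may see.
GRANTS = {
    "internal_scientist": {"A", "B", "C"},
    "cro_partner": {"A"},
}


def visible_records(user):
    """Return only the records in programs the user has been granted."""
    allowed = GRANTS.get(user, set())  # unknown users see nothing
    return [r for r in RECORDS if r["program"] in allowed]


def visible_run_ids(user):
    return [r["run_id"] for r in visible_records(user)]
```

The CRO partner sees only Program A, the internal scientist can still query across all three programs, and there is only one dataset to govern.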

A diagnostic: where does your multi-program traceability actually stand?

Before investing in new systems or workflows, it's worth being precise about where the gaps are. This table is a practical diagnostic — run through it honestly for your current environment.

| Capability | The question to ask | If the answer is "no" or "partially" |
|---|---|---|
| Program-level data architecture | Can you run a query that returns all experiments and samples across Program B, with no manual assembly? | Your programs are organized, not structured. Cross-program analysis will always require effort. |
| Cross-program experiment structure | Does an upstream fermentation record from Program A look structurally identical to one from Program C? | Convention drift is already in progress. It compounds with every new run and every new scientist. |
| Shared material lot traceability | For any shared reagent lot, can you list, in under two minutes, every experiment across all programs that consumed it? | A shared material quality event will require manual reconstruction across programs. That's a scope and timeline risk. |
| Tech transfer readiness | Could you produce a structured development history for your most active program today, without a multi-week assembly project? | Your data is being collected, not structured. Tech transfer will require significant reconstruction effort. |
| Program-level access control | Can you grant a CRO or partner access to Program A data without any visibility into Program B or C? | Partner data segregation requires workarounds (separate systems or manual data extracts) that create their own traceability gaps. |

If two or more rows return "no" or "partially," the cumulative traceability risk across programs is significant — and it grows with every new program, every new scientist, and every shared material lot.

The honest part: this requires commitment before it delivers value

None of the structural decisions described above are free. Building program-level data architecture requires upfront design work. Standardizing experiment templates across programs requires alignment between program leads who may have strong opinions about how their data should look. Enforcing lot-level tracking requires changing habits that have been in place since the lab was small.

The payoff is real, but it's deferred. The labs that invest in this infrastructure at two programs don't fully appreciate the benefit until they're running four. By that point, the labs that didn't invest are spending weeks assembling data packages that take the structured labs hours to produce. They're running investigations with unbounded scope. They're onboarding scientists onto new programs from scratch because the data structures don't transfer.

The other honest thing: the right time to do this is before you feel the pain of not having done it. Multi-program traceability problems don't announce themselves clearly. They accumulate gradually — a slightly inconsistent record here, a lot number entered from memory there, a template deviation that gets copied forward into the next run. By the time the cost is obvious, the debt is significant.

How Genemod is built for multi-program process development

Genemod was designed with the multi-program reality of scaling biotech in mind. The architecture doesn't treat programs as folders or naming conventions — it treats them as a structural data layer, with all the querying, filtering, and access control capabilities that implies.

Process development teams running two, three, or five concurrent programs on Genemod work in a shared data architecture where every record is program-attributed, every shared material lot is traceable across all programs that consumed it, and every experiment record is structured by templates that are consistent across the portfolio. Tech transfer packages are reports. Quality investigations have bounded, queryable scope. New scientists onboard into a consistent structure regardless of which program they're joining.

- Program as a native data layer: every sample, experiment, and material record is program-attributed at creation — cross-program queries and program-level reporting are built in

- Portfolio-spanning templates: process-stage templates applied consistently across all programs — upstream, downstream, formulation, analytical — with program-specific fields where needed

- Lot-level traceability across programs: shared material consumption linked automatically from inventory to experiment records across all programs — no manual lot entry, no cross-program gaps

- Tech transfer-ready by design: structured experiment histories are queryable and exportable as coherent datasets — not assembled from fragments when transfer timelines arrive

- Program-level access controls: scientists and partners see only the programs relevant to their work — data segregation without fragmenting the underlying data architecture

- Scales without re-implementing: the same platform that manages two programs manages ten — no migration, no structural rebuild as the portfolio grows