A Practical Guide to Lab Scaling: What to Fix Before Your Team Doubles

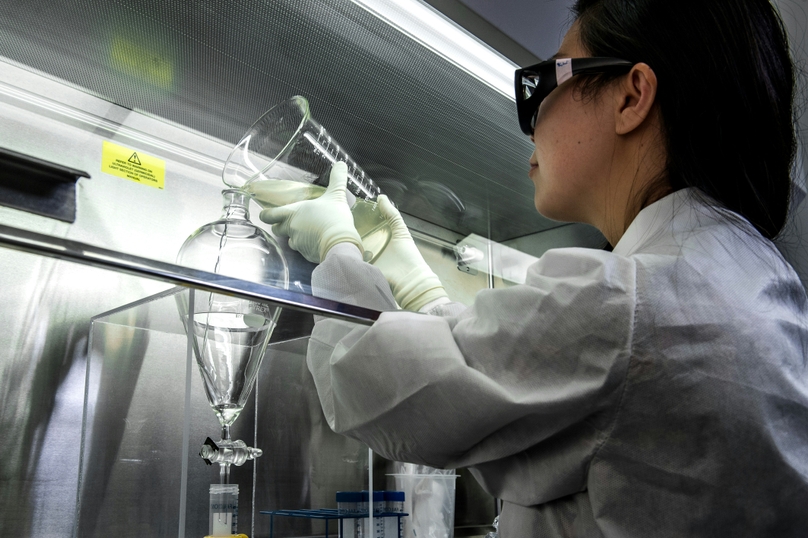

The operational problems that sink scaling biotech labs rarely appear overnight. They accumulate quietly—until the team is too big, the programs too many, and the data too messy to untangle without stopping everything else.

The scaling trap most labs walk into

Doubling a lab's headcount sounds like a straightforward problem—more people, more equipment, more space. In practice, it's a system stress test. Every informal convention that worked when five scientists shared one bench becomes a liability when fifteen scientists across three programs need to share data, hand off samples, and produce results that hold up under scrutiny.

The labs that scale smoothly aren't necessarily better funded or better staffed. They're the ones that fixed the right things early—before volume exposed the gaps. This guide is about identifying those gaps and addressing them before the pressure arrives.

Worth knowing: In most scaling failures, the science isn't the problem. The infrastructure around the science is. Data that can't be found, samples that can't be traced, workflows that depend on one person's memory—these are operational problems, and they have operational solutions.

What actually breaks when a biotech lab doubles

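Based on where scaling labs consistently run into trouble, the failures cluster into six areas: sample identity, experiment documentation, institutional knowledge, workflow handoffs, governance, and tool sprawl. None of them is surprising in hindsight. All of them are preventable.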

At small scale, scientists remember which vial is which. Naming conventions are informal but shared because everyone's in the same room. When the team doubles, that shared context evaporates. New scientists use different ID formats. Splits and derivatives lose their lineage. The freezer becomes an archaeology project.
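As a rough illustration of what an enforced ID format with tracked lineage could look like, here is a minimal Python sketch. The ID pattern (a short program code plus six digits), the `Registry` class, and its method names are all hypothetical, chosen only for this example; a real lab would substitute its own convention and storage.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Hypothetical convention: 2-4 uppercase letters, a hyphen, six digits (e.g. ONC-000123).
SAMPLE_ID = re.compile(r"[A-Z]{2,4}-\d{6}")


@dataclass(frozen=True)
class Sample:
    sample_id: str
    parent_id: Optional[str] = None  # None means an original, non-derived sample


class Registry:
    """In-memory registry that rejects malformed IDs and records parentage."""

    def __init__(self) -> None:
        self._samples: dict[str, Sample] = {}

    def register(self, sample_id: str, parent_id: Optional[str] = None) -> Sample:
        if not SAMPLE_ID.fullmatch(sample_id):
            raise ValueError(f"ID {sample_id!r} does not match the agreed format")
        if sample_id in self._samples:
            raise ValueError(f"ID {sample_id!r} is already registered")
        if parent_id is not None and parent_id not in self._samples:
            raise ValueError(f"unknown parent {parent_id!r}")
        sample = Sample(sample_id, parent_id)
        self._samples[sample_id] = sample
        return sample

    def lineage(self, sample_id: str) -> list[str]:
        """Return IDs from the given sample back to its original ancestor."""
        chain: list[str] = []
        current: Optional[str] = sample_id
        while current is not None:
            chain.append(current)
            current = self._samples[current].parent_id
        return chain
```

Because every split or derivative must name a registered parent, the question "where did this vial come from?" becomes a lookup instead of a search through the freezer.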

Notebooks that look different across scientists are fine when you only need to read your own work. They become a significant problem when someone needs to compare runs, onboard a new analyst, or hand off a project mid-stream. Inconsistent metadata means every cross-experiment analysis starts with manual cleanup—if it's possible at all.
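One way to picture consistent metadata is a template that every experiment record is checked against before it is saved. The sketch below is an assumption-laden example: the field names in `ASSAY_TEMPLATE` and the `validate` helper are invented for illustration and do not describe any particular ELN.

```python
# Hypothetical template: field name -> expected Python type.
ASSAY_TEMPLATE = {
    "sample_id": str,
    "protocol_version": str,
    "operator": str,
    "temperature_c": float,
}


def validate(record: dict, template: dict) -> list[str]:
    """Return a list of problems; an empty list means the record fits the template."""
    problems = []
    # Check every templated field is present and has the expected type.
    for name, expected in template.items():
        if name not in record:
            problems.append(f"missing field: {name}")
        elif not isinstance(record[name], expected):
            actual = type(record[name]).__name__
            problems.append(f"{name}: expected {expected.__name__}, got {actual}")
    # Flag ad hoc fields that other scientists would not know to look for.
    for name in record:
        if name not in template:
            problems.append(f"unexpected field: {name}")
    return problems
```

A record that uses `temp` instead of `temperature_c` is reported with both a missing field and an unexpected one, which is exactly the kind of drift that otherwise surfaces months later as manual cleanup.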

In early-stage labs, critical knowledge lives in people. The senior scientist knows which protocol actually works, which lot number caused problems six months ago, and which instrument drifts in the afternoon. When that person leaves—or when the team grows faster than knowledge can transfer—the lab loses years of context it never formally captured.

Small teams coordinate by talking. That works up to a point. Past that point, verbal handoffs generate missed steps, duplicated work, and—most expensively—experiments run on samples that weren't ready, approved, or correctly characterized. The coordination cost of a doubling team grows faster than headcount.
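A simple way to see why coordination outpaces headcount is to count the possible one-to-one communication paths in a team of n people, which is n(n-1)/2. This is only one common model of coordination load, used here as an assumption rather than a measurement of any real lab.

```python
def pairwise_channels(n: int) -> int:
    """Number of distinct person-to-person pairs in a team of n people."""
    return n * (n - 1) // 2


for size in (5, 10, 15, 30):
    print(f"{size:>2} people -> {pairwise_channels(size):>3} possible pairs")
```

Under this model, going from 5 to 10 people doubles headcount but raises the number of pairs from 10 to 45, and a team of 30 has 435. Verbal handoffs have to survive that growth, which is why they stop working past a certain size.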

Early labs often treat data access as a binary: everyone can see everything, or nothing is organized enough to need restrictions. Both create problems at scale. When programs multiply, partner relationships form, and regulatory timelines tighten, the absence of record-level access controls and audit trails becomes an active liability—not just a future concern.
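To make "record-level access controls and audit trails" concrete, the following Python sketch checks permission per record and logs every attempted change, whether or not it is allowed. The permission model (users granted edit rights per program) and all class and field names are hypothetical simplifications for illustration only.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Any


@dataclass
class Record:
    record_id: str
    program: str
    data: dict[str, Any]


@dataclass
class Store:
    # Hypothetical permission model: user -> programs that user may edit.
    grants: dict[str, set[str]]
    records: dict[str, Record] = field(default_factory=dict)
    audit_log: list[dict[str, Any]] = field(default_factory=list)

    def update(self, user: str, record_id: str, key: str, value: Any) -> None:
        record = self.records[record_id]
        allowed = record.program in self.grants.get(user, set())
        # Log the attempt before applying it, so denied edits are also traceable.
        self.audit_log.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "record": record_id,
            "field": key,
            "old": record.data.get(key),
            "attempted": value,
            "allowed": allowed,
        })
        if not allowed:
            raise PermissionError(f"{user} may not edit records in {record.program}")
        record.data[key] = value
```

The point of the design is that the audit entry is written by the same code path that performs the change, so the trail cannot fall out of sync with the data the way a manually maintained log can.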

Scaling labs accumulate tools. A spreadsheet for inventory. A shared drive for raw data. An ELN for experiment notes. A separate system for requests. Each tool seems reasonable in isolation. Together, they create a reconciliation burden that consumes hours every week and produces a data picture that's always slightly out of sync with reality.

A scaling readiness checklist: what to audit before you hire

| Area | What to Check | Ready to Scale? |
|---|---|---|
| Sample management | Consistent ID format, lineage tracked, storage mapped in a live system | If yes to all three → ready |
| Experiment documentation | Templates in use, metadata fields consistent across scientists, cross-run queries possible | If not templated → fix first |
| Institutional knowledge | Protocols versioned, deviations logged in records, critical context not dependent on one person | Key person risk → address now |
| Workflow handoffs | Requests tracked, approvals documented, status visible without asking around | If managed via Slack → not ready |
| Governance | Audit trails automatic, access controls in place, deviation log exists | If bolt-on or absent → fix first |
| Tool consolidation | Inventory, ELN, and workflow in one connected system, not reconciled manually | If 3+ disconnected tools → consolidate |

Run through this checklist at your current team size. Every "not ready" marks a problem that grows at least as fast as headcount. Fixing it now costs a fraction of fixing it later.
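For teams that want to rerun the audit periodically, the checklist can be kept as plain data. The sketch below assumes a simplified rule in which an area counts as ready only when every one of its criteria is met; the criterion names paraphrase the table, and the true/false values are placeholders for illustration, not findings from any real lab.

```python
# Placeholder answers for an imaginary lab: area -> {criterion: met?}
EXAMPLE_AUDIT = {
    "Sample management": {"consistent IDs": True, "lineage tracked": True, "storage mapped": True},
    "Experiment documentation": {"templates in use": False, "consistent metadata": False, "cross-run queries": False},
    "Institutional knowledge": {"protocols versioned": True, "deviations logged": True, "no single-person dependency": False},
    "Workflow handoffs": {"requests tracked": True, "approvals documented": True, "status visible": True},
    "Governance": {"automatic audit trails": False, "access controls": True, "deviation log": True},
    "Tool consolidation": {"one connected system": True},
}


def areas_to_fix(audit: dict[str, dict[str, bool]]) -> list[str]:
    """Return the areas with at least one unmet criterion, in checklist order."""
    return [area for area, criteria in audit.items() if not all(criteria.values())]


for area in areas_to_fix(EXAMPLE_AUDIT):
    unmet = [name for name, met in EXAMPLE_AUDIT[area].items() if not met]
    print(f"{area}: fix {', '.join(unmet)}")
```

Keeping the answers in one structure makes it easy to repeat the audit before each hiring round and compare the results over time.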

How the pressure changes at different stages

Not every scaling challenge hits at the same time. The problems that surface going from 5 to 15 people are different from the ones that emerge going from 15 to 40. Understanding the progression helps labs prioritize what to address first.

5 → 15 people

Sample identity and experiment consistency break first. Informal conventions stop working. Onboarding becomes a recurring time sink. Focus: standardization and templates.

15 → 30 people

Handoff failures, duplicated work, and data silos emerge. Multiple programs create cross-team coordination gaps. Focus: workflow structure and unified data access.

30 → 60+ people

Governance and compliance requirements become non-optional. Partner and regulatory interactions demand audit-ready records. Focus: access controls, traceability, and GMP readiness.

Any stage: team turnover

Key person departures expose knowledge gaps at any size. Institutional memory stored in people—not systems—is a scaling risk that doesn't wait for headcount thresholds.

How Genemod is built for labs that are scaling

Most lab management software is designed for a specific team size or a specific set of workflows. Genemod is designed for the full arc—from early-stage operations to IND-enabling work and beyond—without requiring teams to migrate or re-implement as complexity grows.

The architecture is built around the problems that actually cause scaling failures. Sample records are lifecycle-aware and linked to experiments natively—not through an integration that requires maintenance. Experiment templates enforce the metadata consistency that makes cross-run analysis possible. Audit trails and access controls are defaults, not add-ons. And the workflow layer—requests, approvals, handoffs—lives in the same platform as inventory and documentation, eliminating the reconciliation overhead that fragmented tools impose.

For labs at the inflection point—past the early stage, not yet at scale—Genemod provides the operational infrastructure to grow through that transition without losing control of data, knowledge, or governance.

- Unified LIMS + ELN: sample records and experiment records connected at the data layer—not reconciled manually across two systems

- Enforced metadata and templates: every scientist captures comparable data from day one, regardless of prior experience with the platform

- Lifecycle-aware inventory: lineage, status, location, and usage history preserved automatically as programs grow

- Audit-native architecture: change history, operator logs, and access controls are built in—active from the first record, not configured later

- Workflow layer built in: requests, approvals, and handoffs tracked in the same system as samples and experiments—no parallel tools required

- Progressive governance: GMP-readiness and Part 11-compatible workflows activate as requirements evolve, without a platform migration

Bottom line: The labs that scale without breaking aren't the ones that move slowest or plan longest. They're the ones that fix the right operational foundations before the volume arrives. Genemod is built to be that foundation — deployable early, durable at scale, and designed so the data your lab generates today is still traceable, queryable, and audit-ready years from now.