What Makes a Good LIMS in 2026: The Criteria That Actually Matter for Scaling Biotech Labs

LIMS evaluation hasn't kept up with how biotech labs actually operate. Most criteria lists are still written for what labs needed ten years ago. Here's what to actually look for — and why the difference matters more than most teams realize before they've signed a contract.

Why most LIMS evaluations miss the point

The standard LIMS evaluation process goes roughly like this: compile a feature checklist, send it to vendors, score the responses, schedule demos, pick the highest scorer. It's a reasonable process for procurement. It's a poor process for selecting operational infrastructure.

The problem is that feature checklists evaluate what a system can do in isolation. They don't evaluate how a system behaves under the specific pressures a scaling biotech lab will actually face: three programs running in parallel, a team that doubled in 18 months, a regulatory timeline that moved up, a data science team asking why the experimental data isn't analysis-ready. A LIMS that checks every box on a feature list can still fail badly at all of those things.

The criteria that matter in 2026 aren't about features. They're about architecture, defaults, and operational fit. A system's default behavior — what it does automatically without configuration — tells you more about how it will perform under pressure than any feature demo.

The most expensive LIMS mistakes aren't made by labs that chose obviously bad systems. They're made by labs that chose systems that looked good in demos but whose defaults, architecture, and ceiling didn't match where the lab was heading. The gap usually becomes visible around 18 months after go-live — when reconfiguring or migrating is significantly more disruptive than it would have been upfront.

How the criteria have shifted

The LIMS category was built around a specific set of problems — primarily in clinical, environmental, and quality testing environments. Sample custody. Chain of custody. Test result recording. Instrument integration. Those requirements haven't gone away, but for biotech R&D labs in 2026, they're a starting point rather than a complete picture.

The traditional checklist (still required, now table stakes):

- Sample location tracking

- Barcode and label support

- Basic instrument integration

- Test result recording

- Chain of custody documentation

- Report generation

What biotech R&D labs need on top of that in 2026:

- Native ELN integration (same data layer)

- Structured, queryable metadata by default

- Lifecycle-aware sample management

- Cross-program traceability at scale

- Governance as default, not add-on

- API depth for AI and analytics pipelines

The shift isn't about abandoning the old requirements. It's about recognizing that biotech R&D labs operate in a fundamentally more connected, data-intensive, and regulation-adjacent environment than the context most LIMS platforms were designed for. Evaluating a LIMS for a 2026 lab on 2015 criteria produces a 2015 answer.

The eight criteria that actually matter

Native ELN integration — not a bolt-on or an integration

This is the single most important architectural question in a LIMS evaluation, and it's the one most teams ask too late. A LIMS that manages samples in one system and relies on an ELN integration to connect them to experiments is not a unified platform; it's two platforms with a data pipe between them. That pipe requires maintenance, can break when either platform updates, and produces a data model where the connection between a sample and the experiment that consumed it is only as strong as the weakest link in the integration.

Native integration means the sample record and the experiment record exist in the same data layer. When a scientist consumes a sample in an experiment, the connection is structural — the lot number, lineage, QC status, and storage history flow into the experiment record because they share the same database, not because an API call was successful. That's a fundamentally different operational and data integrity outcome.
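A minimal sketch of what "same data layer" can mean in practice, using Python's built-in sqlite3 module. The table and column names here are hypothetical and do not describe any vendor's schema. The point is only that the sample-to-experiment link is a foreign key checked by the database rather than a sync job between two systems.

```python
import sqlite3


def build_shared_layer() -> sqlite3.Connection:
    """Create a hypothetical schema where samples and experiments share one database."""
    conn = sqlite3.connect(":memory:")
    conn.execute("PRAGMA foreign_keys = ON")  # SQLite only enforces FKs when enabled
    conn.executescript("""
        CREATE TABLE samples (
            sample_id  TEXT PRIMARY KEY,
            lot_number TEXT NOT NULL,
            qc_status  TEXT NOT NULL
        );
        CREATE TABLE experiments (
            experiment_id TEXT PRIMARY KEY,
            title         TEXT NOT NULL
        );
        -- A consumption row cannot exist without a real sample and a real experiment.
        CREATE TABLE consumptions (
            experiment_id TEXT NOT NULL REFERENCES experiments(experiment_id),
            sample_id     TEXT NOT NULL REFERENCES samples(sample_id),
            volume_ul     REAL NOT NULL
        );
    """)
    return conn


conn = build_shared_layer()
conn.execute("INSERT INTO samples VALUES ('S-001', 'LOT-42', 'passed')")
conn.execute("INSERT INTO experiments VALUES ('E-100', 'Binding assay')")
conn.execute("INSERT INTO consumptions VALUES ('E-100', 'S-001', 25.0)")

# Lot number and QC status reach the experiment through a join, not an API call.
row = conn.execute("""
    SELECT e.title, s.lot_number, s.qc_status
    FROM consumptions c
    JOIN experiments e ON e.experiment_id = c.experiment_id
    JOIN samples s     ON s.sample_id = c.sample_id
""").fetchone()
```

In this sketch, recording consumption of a sample that does not exist fails at write time with an integrity error, which is the structural guarantee an integration layer cannot give on its own.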

Structured metadata enforcement — by default, not by discipline

A LIMS that allows free-text entry for critical fields is not enforcing data quality — it's delegating it to individual scientists. In a lab with ten scientists and three programs, delegation produces ten slightly different data structures. Cross-program analysis requires cleanup before it can start. Onboarding a new scientist means teaching them the informal conventions, because the system doesn't enforce the formal ones.

Structured metadata means typed fields — numeric, date, dropdown, text with validation — that are required at the point of entry and consistent across every record of the same type. It means templates that define what a fermentation run record must contain, and make it impossible to submit an incomplete one. The goal is not bureaucracy. The goal is data that's queryable by default rather than queryable after cleanup.
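As an illustration of template-enforced metadata, here is a small sketch in Python. The `Field` class, the `FERMENTATION_RUN` template, and its field names are assumptions made up for this example, not a description of any product's template format.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass(frozen=True)
class Field:
    name: str
    kind: type                        # expected Python type for the value
    choices: Optional[tuple] = None   # allowed values for dropdown-style fields


# Hypothetical template: what a fermentation run record must contain.
FERMENTATION_RUN = (
    Field("strain", str),
    Field("start_date", date),
    Field("temperature_c", float),
    Field("vessel", str, choices=("2L", "10L", "50L")),
)


def validate(template: tuple, record: dict) -> list[str]:
    """Return a list of problems; an empty list means the record may be submitted."""
    errors = []
    for f in template:
        value = record.get(f.name)
        if value is None or value == "":
            errors.append(f"{f.name}: required")
        elif not isinstance(value, f.kind):
            errors.append(f"{f.name}: expected {f.kind.__name__}")
        elif f.choices is not None and value not in f.choices:
            errors.append(f"{f.name}: must be one of {f.choices}")
    return errors


complete = {"strain": "K-12", "start_date": date(2026, 1, 5),
            "temperature_c": 37.0, "vessel": "10L"}
incomplete = {"strain": "K-12", "temperature_c": "37", "vessel": "5L"}

ok_errors = validate(FERMENTATION_RUN, complete)
bad_errors = validate(FERMENTATION_RUN, incomplete)
```

Here the second record is rejected for three separate reasons: a missing date, a temperature entered as text, and a vessel size outside the dropdown. Those are the inconsistencies that otherwise surface later as cleanup work.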

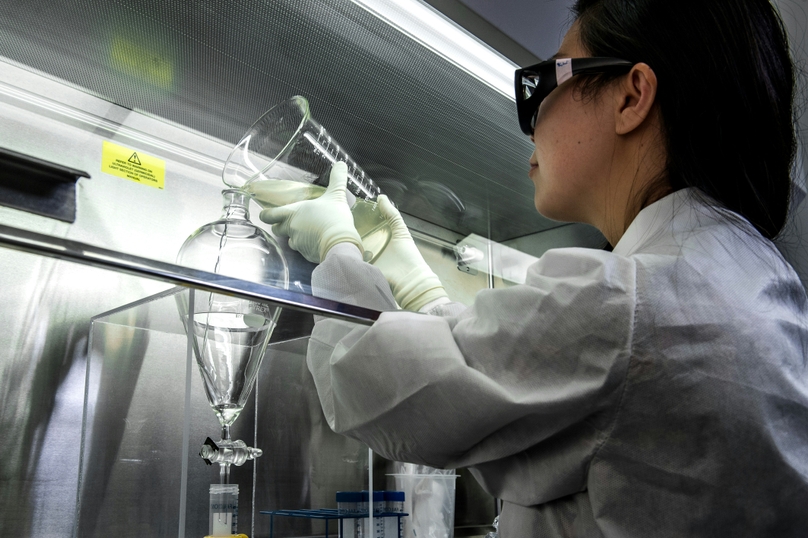

Lifecycle-aware sample management — beyond location

Location is the minimum. A LIMS that tells you where a sample is stored is solving the least complex part of the problem. What labs at scale actually need is a system that tracks the full lifecycle: where a sample came from, what QC status it carries, what it has been used for, how much remains, what has been derived from it, and whether it's still fit for its intended purpose.

Lifecycle-aware management means status is live and centralized — a QC hold applied in one part of the system is immediately visible to every scientist who might pull that sample. It means lineage is structural — splits and derivatives are linked to their parents at creation, not documented retrospectively. And it means consumption is an event with context — not just a volume decrement, but a record of which experiment consumed it and under what conditions.
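The three properties above (live status, structural lineage, consumption as an event) can be sketched in a few dozen lines of Python. The `Sample` and `Registry` classes are hypothetical, the registry is in memory only, and the rule that a QC hold on a parent also blocks its derivatives is an assumption chosen for illustration.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Sample:
    sample_id: str
    volume_ul: float
    qc_status: str = "passed"          # "passed" or "hold" in this sketch
    parent_id: Optional[str] = None    # set at creation for splits and derivatives
    events: list = field(default_factory=list)


class Registry:
    """Hypothetical in-memory registry; a real system would persist this."""

    def __init__(self) -> None:
        self.samples: dict[str, Sample] = {}

    def register(self, sample_id: str, volume_ul: float) -> Sample:
        sample = Sample(sample_id, volume_ul)
        self.samples[sample_id] = sample
        return sample

    def lineage(self, sample_id: str) -> list[str]:
        """Return ancestor IDs, nearest parent first."""
        chain = []
        parent_id = self.samples[sample_id].parent_id
        while parent_id is not None:
            chain.append(parent_id)
            parent_id = self.samples[parent_id].parent_id
        return chain

    def _withdraw(self, sample_id: str, volume_ul: float) -> Sample:
        """Check QC status on the sample and its ancestors, then reduce volume."""
        for sid in [sample_id, *self.lineage(sample_id)]:
            if self.samples[sid].qc_status != "passed":
                raise ValueError(f"{sample_id} blocked: {sid} is on QC hold")
        sample = self.samples[sample_id]
        if volume_ul > sample.volume_ul:
            raise ValueError(f"{sample_id} has only {sample.volume_ul} uL left")
        sample.volume_ul -= volume_ul
        return sample

    def split(self, parent_id: str, child_id: str, volume_ul: float) -> Sample:
        """Create a derivative that is linked to its parent at creation."""
        self._withdraw(parent_id, volume_ul)
        child = Sample(child_id, volume_ul, parent_id=parent_id)
        self.samples[child_id] = child
        return child

    def consume(self, sample_id: str, volume_ul: float, experiment_id: str) -> None:
        """Record consumption as an event with experiment context."""
        sample = self._withdraw(sample_id, volume_ul)
        sample.events.append({"experiment": experiment_id, "volume_ul": volume_ul})


reg = Registry()
reg.register("S1", 100.0)
reg.split("S1", "S1a", 40.0)
reg.consume("S1a", 10.0, "E-100")
reg.samples["S1"].qc_status = "hold"   # a hold on the parent now blocks S1a as well
```

After the hold is applied, any further attempt to consume `S1a` raises an error, because status is read from the shared registry at the moment of use rather than from a copy someone made earlier.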

Cross-program traceability at scale

Single-program labs have a simpler traceability requirement than multi-program ones. When a shared reagent lot is used across three programs, the LIMS needs to trace that lot's usage across all three — not just within the program where a problem was observed. When a quality event occurs, the investigation's scope should be determined by data, not by asking around.

This requires a data architecture where programs are a native organizational layer — not folders, not naming convention prefixes — and where material lot usage is queryable across the entire portfolio simultaneously. It also requires that program-level data segregation (for CROs or labs with partner confidentiality requirements) doesn't come at the cost of cross-program traceability for internal use. Those two requirements can coexist, but only in a platform designed to support both.
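A rough sketch of the two requirements side by side, in Python. The `Usage` record, the sample data, and the function names are invented for illustration. The idea is that program is a field on every usage record, so the same data supports both a portfolio-wide lot query and a per-partner restricted view.

```python
from collections import defaultdict
from typing import NamedTuple


class Usage(NamedTuple):
    lot: str          # material lot number
    program: str      # program is a first-class field, not a folder name
    experiment: str


# Hypothetical usage records spanning three programs.
USAGES = [
    Usage("LOT-42", "Program A", "E-100"),
    Usage("LOT-42", "Program B", "E-207"),
    Usage("LOT-77", "Program B", "E-208"),
    Usage("LOT-42", "Program C", "E-311"),
    Usage("LOT-42", "Program A", "E-101"),
]


def experiments_by_program(usages: list, lot: str) -> dict[str, list[str]]:
    """Internal view: every experiment that used the lot, grouped by program."""
    affected = defaultdict(list)
    for u in usages:
        if u.lot == lot:
            affected[u.program].append(u.experiment)
    return dict(affected)


def visible_to(usages: list, allowed_programs: set) -> list:
    """Partner view: only records from programs the viewer is entitled to see."""
    return [u for u in usages if u.program in allowed_programs]


scope = experiments_by_program(USAGES, "LOT-42")
partner_scope = experiments_by_program(visible_to(USAGES, {"Program B"}), "LOT-42")
```

With this shape, the scope of a quality investigation on `LOT-42` comes from one query over the whole portfolio, while a partner restricted to Program B sees only its own experiment.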

Audit trails and governance as defaults — not tier features

The question to ask is not "does this LIMS support audit trails?" Every serious LIMS vendor will say yes. The question is whether audit trails are on by default from day one, covering all record types, or whether they're a feature that requires configuration, an enterprise tier, or a compliance module to activate.

The architectural difference matters. A platform where audit trails are native produces a complete, continuous record starting from the first sample registered. A platform where they're configured later produces a record that starts from the configuration date, and everything before that date exists outside the governance envelope. For labs approaching IND-enabling work or GMP-adjacent operations, that gap has regulatory implications that are difficult to close after the fact.

GMP and Part 11 readiness — progressive, not a platform replacement

Most early-stage biotech labs don't need full 21 CFR Part 11 compliance on day one. The mistake is choosing a platform that can't grow into it — one that handles early-stage operations well but requires a system replacement when IND-enabling timelines tighten or manufacturing requirements emerge.

A LIMS with a progressive compliance pathway means that the features required for Part 11 — electronic signatures, controlled records, validated workflows, tamper-evident audit logs — are architecturally present from early stages and can be activated as requirements evolve, without rebuilding the data model or migrating historical records. The transition from research-mode to regulated-mode happens on the same platform.

The question to ask a vendor is not "are you Part 11 compliant?" but "what does the transition look like for a current customer moving from early-stage to IND-enabling operations on your platform?" A vendor that can describe that transition specifically, with reference customers who've done it, is giving a different answer than one that defers to a roadmap.
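One common way to make an audit log tamper-evident is a hash chain, where each entry's hash covers the previous entry's hash. The Python sketch below illustrates that idea only. It is not a Part 11 implementation, the field names are hypothetical, and timestamps and signatures are left out to keep the example deterministic.

```python
import hashlib
import json

GENESIS = "0" * 64                      # placeholder hash for the first entry
FIELDS = ("user", "action", "record_id")


def _digest(prev_hash: str, entry: dict) -> str:
    """Hash the entry content together with the previous entry's hash."""
    payload = json.dumps(entry, sort_keys=True) + prev_hash
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append(log: list, user: str, action: str, record_id: str) -> None:
    """Add an entry whose hash depends on everything that came before it."""
    prev = log[-1]["hash"] if log else GENESIS
    entry = {"user": user, "action": action, "record_id": record_id}
    log.append({**entry, "prev": prev, "hash": _digest(prev, entry)})


def verify(log: list) -> bool:
    """Recompute the chain; any edited, removed, or reordered entry breaks it."""
    prev = GENESIS
    for item in log:
        entry = {k: item[k] for k in FIELDS}
        if item["prev"] != prev or item["hash"] != _digest(prev, entry):
            return False
        prev = item["hash"]
    return True


log: list = []
append(log, "asmith", "register", "S-001")
append(log, "bjones", "qc_hold", "S-001")
intact = verify(log)

log[0]["action"] = "delete"             # simulate an after-the-fact edit
tampered_ok = verify(log)
```

The relevance to the evaluation question is that a chain like this only covers entries written while logging was on, which is why "on from the first record" and "activated later" are different architectural starting points.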

API depth — structured data access, not just file export

In 2026, a LIMS that can't be queried programmatically at the record level is not AI-ready. It's archival. The difference matters because the value of lab data increasingly comes from what can be done with it downstream — predictive modeling, cross-study analysis, process optimization, digital twin development. All of those workflows require structured, accessible data. A system whose primary data export is a PDF or a flat CSV is a ceiling, not a foundation.

API depth means record-level access to sample data, experiment data, and metadata — not just file retrieval. It means endpoints that data science teams can query programmatically without writing custom scrapers. It means documentation that exists and is maintained. And it means reference customers who are actually using the API in production, not just running proofs of concept.
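To make "record-level access" concrete, the Python sketch below works on a made-up JSON payload of the kind a record-level endpoint might return. The payload shape, field names, and values are all hypothetical, and no real API is called. What it shows is that typed, per-record data can feed an analysis directly, with no PDF parsing or column guessing in between.

```python
import json
from collections import defaultdict
from statistics import mean

# Hypothetical response body: one object per result record, with typed fields.
PAYLOAD = """
[
  {"sample_id": "S-001", "lot": "LOT-42", "assay": "titer", "value": 1.5, "unit": "g/L"},
  {"sample_id": "S-002", "lot": "LOT-42", "assay": "titer", "value": 2.5, "unit": "g/L"},
  {"sample_id": "S-003", "lot": "LOT-77", "assay": "titer", "value": 3.0, "unit": "g/L"},
  {"sample_id": "S-003", "lot": "LOT-77", "assay": "purity", "value": 98.0, "unit": "%"}
]
"""


def mean_by_lot(payload: str, assay: str) -> dict[str, float]:
    """Average one assay's values per lot, straight from structured records."""
    groups = defaultdict(list)
    for record in json.loads(payload):
        if record["assay"] == assay:
            groups[record["lot"]].append(record["value"])
    return {lot: mean(values) for lot, values in groups.items()}


titer_by_lot = mean_by_lot(PAYLOAD, "titer")
```

A useful demo request is to ask the vendor to show the equivalent of this against their own documented endpoints, on real record types, during the evaluation.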

Deployment speed and total cost of ownership — not just license cost

Enterprise LIMS platforms can take six to eighteen months to deploy. For scaling biotech labs that need operational infrastructure now — not after a prolonged implementation cycle — that timeline is a real cost, not just an inconvenience. The months spent in implementation are months where data is accumulating in the old system, where new scientists are onboarding without structured infrastructure, and where the problems the LIMS was supposed to solve keep compounding.

Total cost of ownership for a LIMS includes license fees, implementation costs, ongoing configuration and administration overhead, and the cost of the next migration if the platform has a ceiling. A platform that deploys in weeks rather than months, that scientists can use without dedicated IT support, and that scales without structural changes has a meaningfully different TCO profile than one that doesn't — even if the headline license cost is similar.
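The cost components listed above can be put into a simple comparison. The Python sketch below uses placeholder figures that are purely illustrative and not drawn from any vendor or benchmark. The formula is the plain sum of the components named in the paragraph.

```python
def total_cost_of_ownership(
    license_per_year: float,
    implementation: float,
    admin_per_year: float,
    years: int,
    migration_cost: float = 0.0,
) -> float:
    """One-time costs plus recurring costs over the evaluation horizon."""
    recurring = years * (license_per_year + admin_per_year)
    return implementation + recurring + migration_cost


# Placeholder numbers only: same headline license, different everything else.
platform_a = total_cost_of_ownership(
    license_per_year=50_000, implementation=20_000,
    admin_per_year=10_000, years=5,
)
platform_b = total_cost_of_ownership(
    license_per_year=50_000, implementation=150_000,
    admin_per_year=60_000, years=5, migration_cost=100_000,
)
```

With these made-up inputs the two platforms have identical license fees but very different five-year totals, which is the pattern the paragraph describes. Substitute real quotes and internal staffing estimates before drawing any conclusion.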

A practical evaluation scorecard

Use this in vendor demos and RFP responses. The goal isn't to find a platform that scores perfectly — it's to surface the specific gaps early, before they become contractual commitments.

| Criterion | What good looks like | Red flag response |
|---|---|---|
| ELN integration | Same data model; no integration layer between sample and experiment records | "We integrate with leading ELN providers" or "our ELN module connects via API" |
| Metadata enforcement | Required fields by record type, typed inputs, submission blocked if incomplete | "Highly configurable" without specifying what's enforced by default |
| Lifecycle management | Live status, structural lineage, consumption linked to experiment context | Status updates require manual entry; lineage tracked in notes rather than data layer |
| Cross-program traceability | Programs as native data layer; lot usage queryable across portfolio simultaneously | Programs organized as folders or naming conventions; cross-program queries require manual assembly |
| Audit trails | Default on, all record types, from first record, no tier restriction | Audit trails available on enterprise tier or require configuration to activate |
| GMP readiness | Progressive activation on same platform; reference customers who've made the transition | GMP version is a separate product or requires significant re-implementation |
| API depth | Record-level access, documented endpoints, production reference customers | API covers file retrieval only; documentation is limited or in development |
| Deployment and TCO | Weeks to go-live; scientist-led adoption; scales without re-implementation | 6+ month implementation timeline; requires dedicated admin or IT ownership |

Red flag responses don't necessarily disqualify a vendor — but they should trigger specific follow-up questions and a realistic assessment of the configuration investment required to close the gap.
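For teams that want to track scorecard results across several vendors, a small Python helper like the following can list what still needs follow-up. The rating labels ("good", "red_flag") and the function name are assumptions for this sketch. Unrated criteria are treated as open items rather than passes.

```python
# The eight criteria from the scorecard, in table order.
CRITERIA = (
    "ELN integration",
    "Metadata enforcement",
    "Lifecycle management",
    "Cross-program traceability",
    "Audit trails",
    "GMP readiness",
    "API depth",
    "Deployment and TCO",
)


def follow_ups(ratings: dict[str, str]) -> list[str]:
    """Return criteria that are red-flagged or not yet rated, in table order."""
    return [c for c in CRITERIA if ratings.get(c, "unrated") != "good"]


# Hypothetical ratings captured after one vendor demo.
vendor_ratings = {
    "ELN integration": "good",
    "Metadata enforcement": "red_flag",
    "Lifecycle management": "good",
    "Cross-program traceability": "good",
    "Audit trails": "red_flag",
    "API depth": "good",
}

open_items = follow_ups(vendor_ratings)
```

The output is a question list rather than a score, consistent with the point that a red flag should trigger follow-up instead of automatic disqualification.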

The question underneath all of this

Every criterion in this guide points back to the same underlying question: is this platform designed to help a lab run its operations, or designed to record what happened after the fact?

A system that enforces metadata, connects samples to experiments natively, keeps status live, traces across programs automatically, and logs governance events by default is helping the lab operate. A system that requires manual data entry at every junction, relies on integration layers for connectivity, and puts governance behind a configuration requirement is recording operations — imperfectly, with gaps, and with a data quality problem that compounds with every passing month.

In 2026, the distance between those two categories is widening. The labs that are moving fastest — and building data assets that hold their value through clinical development and beyond — are the ones that chose the former at the right moment.

Why Genemod is built for this standard

Genemod was designed around the criteria described in this guide — not as features added to a traditional LIMS, but as the foundational architecture of a platform built for modern biotech R&D. The LIMS and ELN share a single data layer. Metadata is enforced by template. Sample lifecycle is tracked natively. Audit trails are default. API access is at the record level. And the platform scales from early-stage through regulated operations without requiring a second implementation.

- Unified LIMS + ELN architecture: sample and experiment records in the same data model — no integration layer, no connectivity gaps

- Template-enforced structured metadata: typed, required fields by record type — consistent data from every scientist, from day one

- Lifecycle-aware sample management: live status, structural lineage, bidirectional experiment linkage — all in one record

- Cross-program traceability: programs as a native data layer — portfolio-level queries and lot traceability across all programs simultaneously

- Default-on governance: audit trails, access controls, and change history from the first record — no configuration required

- Progressive GMP readiness: compliance features activate as regulatory requirements evolve — on the same platform, without migration

- Record-level API access: structured data accessible programmatically — built for analytical and AI pipelines, not just file retrieval

- Fast deployment, low TCO: operational in weeks, not months — scientist-led adoption, no dedicated IT ownership required

A good LIMS in 2026 isn't the one with the longest feature list or the most recognizable name. It's the one whose architecture matches where your lab is heading — connecting data by default, enforcing structure without friction, and scaling through regulated environments without a platform replacement. Evaluate on architecture. The features will follow.